Autonomous Vehicle Development with Jetson Nano and DonkeyCar

Overview

In this project, we explored autonomous driving on the NVIDIA Jetson Nano platform. Our implementation included lane following with computer vision, GPS-guided lap following, and dynamic lane switching using OpenCV. The project demonstrates our capabilities in embedded systems, robotics, and AI.

Introduction

Autonomous vehicles are at the forefront of modern technology. This project focuses on building a scaled-down prototype capable of autonomous navigation on the NVIDIA Jetson Nano, using computer vision, GPS, and machine learning techniques.

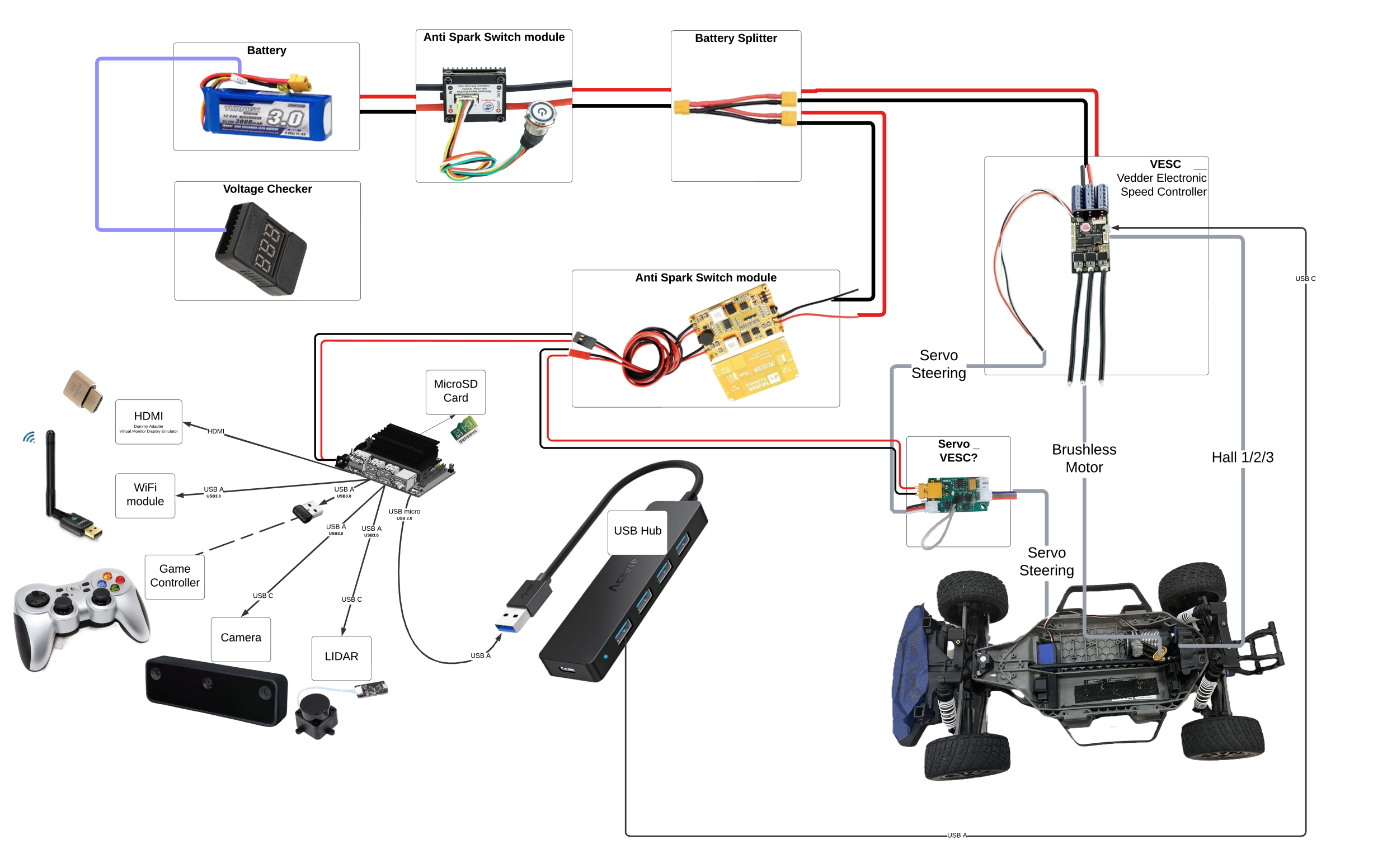

Hardware Setup

- Onboard Computer: NVIDIA Jetson Nano

- Sensors: USB Camera, GPS Module

- Actuators: Servo motor for steering, ESC for throttle

- Other Components: Wireless relay for emergency stop, LEDs for system feedback

Software Architecture

- Operating System: Ubuntu for Jetson Nano

- Frameworks: OpenCV, ROS2, DonkeyCar AI

- Programming Language: Python

Computer Vision: Lane Following

We implemented lane-following using OpenCV, detecting lane markers through edge detection and color segmentation. This module adjusts the vehicle’s steering dynamically to stay within lanes.

Steps:

- Preprocess the input image (grayscale conversion, Gaussian blur).

- Apply edge detection (Canny).

- Perform Hough Transform to identify lane lines.

- Calculate steering angles using the detected lane position.
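
As an illustration of the last step, here is a minimal sketch of how a steering angle could be derived from detected line segments. It is not our exact implementation. It assumes the segments arrive as `(x1, y1, x2, y2)` pixel tuples, which is the layout `cv2.HoughLinesP` produces for each line. The function name, the slope filter, the fallback to the frame edge when one lane line is missing, and the look-ahead distance of half the frame height are all assumptions made for this example.

```python
import math


def steering_angle_deg(segments, frame_width, frame_height):
    """Return a steering angle in degrees (positive = steer right).

    segments: iterable of (x1, y1, x2, y2) pixel tuples.
    Returns None when no usable lane segment is found.
    """
    y_bottom = frame_height - 1
    mid_x = frame_width / 2
    left_xs, right_xs = [], []

    for x1, y1, x2, y2 in segments:
        dx, dy = x2 - x1, y2 - y1
        # Assumption: near-horizontal segments are not lane lines.
        if dy == 0 or abs(dy) < 0.5 * abs(dx):
            continue
        # Extrapolate the segment down to the bottom row of the frame.
        x_bottom = x1 + (y_bottom - y1) * dx / dy
        if x_bottom < mid_x:
            left_xs.append(x_bottom)
        else:
            right_xs.append(x_bottom)

    if not left_xs and not right_xs:
        return None

    # Assumption: if one side is missing, use the frame edge as a stand-in.
    left = sum(left_xs) / len(left_xs) if left_xs else 0.0
    right = sum(right_xs) / len(right_xs) if right_xs else float(frame_width)

    # Horizontal offset of the lane centre from the image centre.
    offset = (left + right) / 2 - mid_x
    # Assumption: aim at a point half a frame height ahead of the car.
    look_ahead = frame_height / 2
    return math.degrees(math.atan2(offset, look_ahead))
```

For example, in a 640x480 frame with lane lines symmetric about the image centre, the function returns 0 degrees. If the lane centre sits to the right of the image centre, the angle is positive.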

GPS Integration: Follow Laps

The GPS module was integrated to record and replay laps. The vehicle followed a pre-recorded path using real-time GPS data to calculate position and make course corrections.

Steps:

- Record waypoints during the initial manual lap.

- Use PID control to minimize cross-track error while following the saved path.
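
The sketch below shows one way the cross-track error and PID correction could be computed. It is illustrative only. The helper names, the equirectangular projection to local metres, and the steering convention (a normalized value in [-1, 1], positive meaning "steer right") are assumptions for this example, and the gains would need tuning on the real vehicle.

```python
import math

EARTH_RADIUS_M = 6_371_000.0


def to_local_xy(lat, lon, ref_lat, ref_lon):
    """Project latitude/longitude to metres east (x) and north (y) of a reference point."""
    x = math.radians(lon - ref_lon) * EARTH_RADIUS_M * math.cos(math.radians(ref_lat))
    y = math.radians(lat - ref_lat) * EARTH_RADIUS_M
    return x, y


def cross_track_error(p, a, b):
    """Signed distance in metres from p to the path segment a -> b.

    Positive when p lies to the left of the direction of travel.
    """
    dx, dy = b[0] - a[0], b[1] - a[1]
    length = math.hypot(dx, dy)
    if length == 0:
        return math.hypot(p[0] - a[0], p[1] - a[1])
    return (dx * (p[1] - a[1]) - dy * (p[0] - a[0])) / length


class PID:
    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def update(self, error, dt):
        self.integral += error * dt
        # No derivative term on the first sample, since there is no history yet.
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def steering_command(p, a, b, pid, dt):
    """Return a steering value clamped to [-1, 1]; left of path -> steer right."""
    correction = pid.update(cross_track_error(p, a, b), dt)
    return max(-1.0, min(1.0, correction))
```

In use, each recorded waypoint and each live GPS fix would be passed through `to_local_xy` with a shared reference point, and `steering_command` would be called once per control cycle with the current path segment.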

Challenges and Solutions

Challenges:

- Limited GPU Power: The Jetson Nano’s computational capacity required optimizing our OpenCV pipeline.

- Noise in GPS Data: Signal interference led to minor inaccuracies in path following.

- Real-Time Constraints: Decision-making required low-latency processing of sensor inputs.

Solutions:

- Used GPU-accelerated OpenCV for efficient processing.

- Implemented a Kalman filter to smooth GPS data.

- Leveraged asynchronous programming to handle real-time inputs.
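
To illustrate the GPS smoothing idea, here is a minimal scalar Kalman filter sketch. It is a simplification and not necessarily the filter we ran on the car. It assumes a constant-position model applied independently to each coordinate, and the class name and the two variance parameters are hypothetical values that would need tuning. A filter for a moving vehicle would typically also track velocity.

```python
class ScalarKalman:
    """One-dimensional Kalman filter with a constant-position model."""

    def __init__(self, process_var, measurement_var):
        self.q = process_var      # how much the true value may drift per step
        self.r = measurement_var  # variance of the GPS measurement noise
        self.x = None             # current estimate
        self.p = 0.0              # variance of the current estimate

    def update(self, z):
        if self.x is None:
            # Initialise from the first measurement.
            self.x = z
            self.p = self.r
            return self.x
        # Predict: the estimate stays put, but its uncertainty grows.
        self.p += self.q
        # Correct: blend prediction and measurement using the Kalman gain.
        k = self.p / (self.p + self.r)
        self.x += k * (z - self.x)
        self.p *= 1 - k
        return self.x
```

One filter instance per axis (for example, one for the east coordinate and one for the north coordinate) would be updated with every new GPS fix. A larger `measurement_var` relative to `process_var` gives smoother but slower-reacting output.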

Results

Our autonomous vehicle achieved:

- Accurate lane detection and following.

- GPS path-following precision within 0.5 meters of the recorded path.

- Smooth and reliable dynamic lane switching.

Future Work

- Integrating LIDAR for obstacle detection.

- Enhancing GPS accuracy with RTK corrections.

- Testing in more complex environments, such as intersections and urban layouts.

Conclusion

This project exemplifies the fusion of computer vision, embedded systems, and AI to create a functional autonomous vehicle prototype. It is a testament to the potential of accessible hardware and open-source software in solving complex engineering problems.

Acknowledgments

Special thanks to UCSD’s ECE & MAE 148 team and our mentor for guidance throughout the project.